AI BYOM & Cloud Routing

OpenNVR fundamentally separates continuous recording from analytics. By leveraging a stateless AI adapter architecture, you can wire video frames dynamically to local GPU models or external Cloud APIs without risking the stability of the core NVR engine.

Local Edge Adapters (Privacy-First)

Executing models locally keeps inference data on your own hardware and avoids recurring cloud inference costs. The ai-adapters container sits on the internal Docker network and listens for inference routing requests from the NVR core.

Deployment Steps:

- Open a secure shell on your NVR host and locate docker-compose.yml.

- Ensure the ai-adapters service is active and the ADAPTER_URL environment variable is explicitly injected into opennvr-core:

      - ADAPTER_URL=http://opennvr_ai:9100

- Start (or recreate) the adapter service:

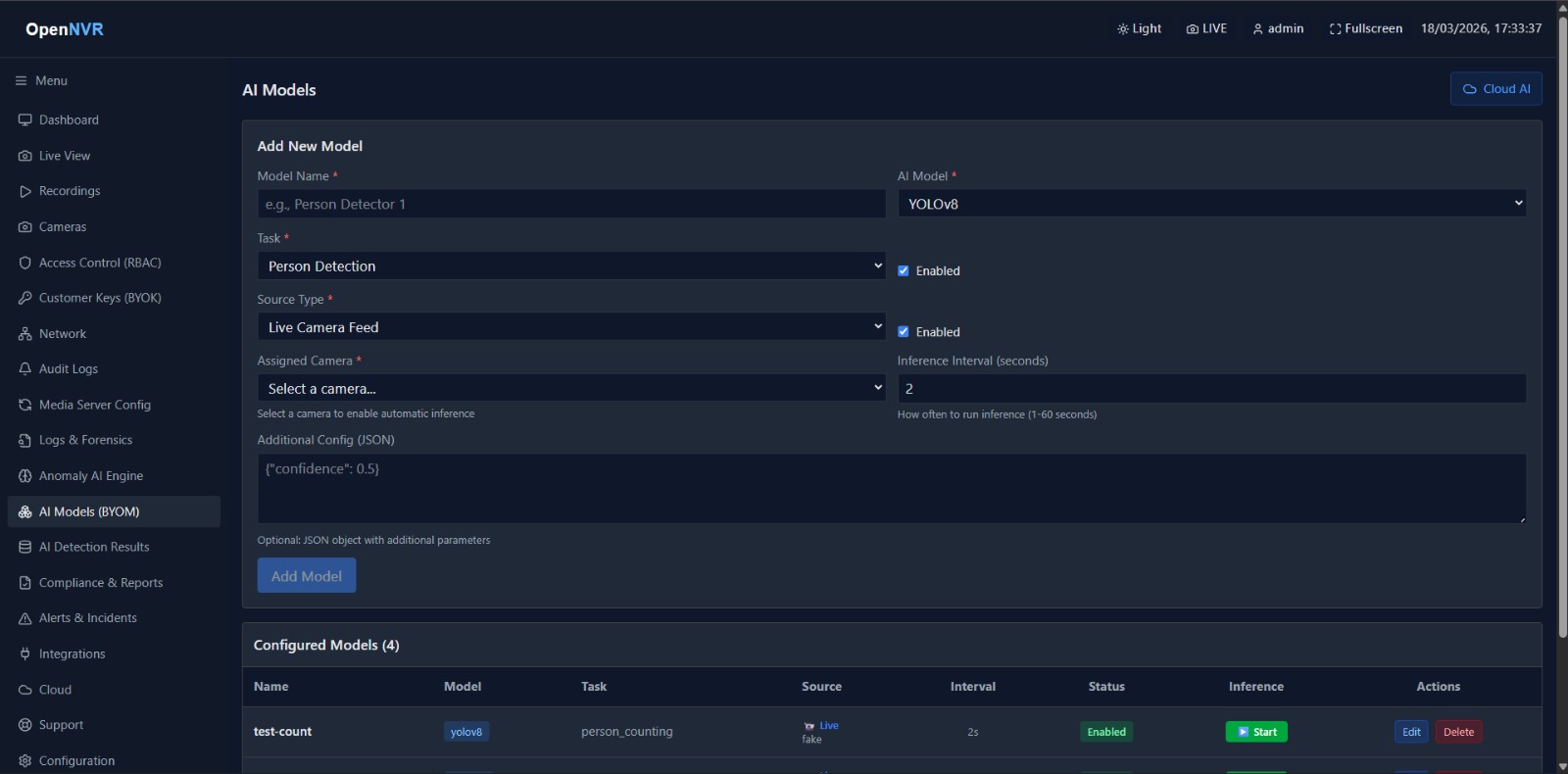

    docker compose up -d ai-adapters

- Navigate to AI Models (BYOM) in the GUI. You can now select and bind local models (e.g., YOLO, InsightFace) directly to individual tracking pipelines.
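Before opening the GUI, it can help to confirm that the resolved Compose configuration actually contains the wiring described in the steps above. The following is a minimal sketch, not part of OpenNVR: it assumes the service names opennvr-core and ai-adapters from the steps (yours may differ), and the helper names service_env and check_adapter_wiring are hypothetical. It reads the output of docker compose config --format json.

```python
import json
import subprocess


def load_compose_config() -> dict:
    """Return the fully resolved Compose configuration as a dict.

    Must be run from the directory that contains docker-compose.yml.
    """
    result = subprocess.run(
        ["docker", "compose", "config", "--format", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(result.stdout)


def service_env(config: dict, service: str) -> dict:
    """Normalize a service's environment block to a plain dict."""
    env = config.get("services", {}).get(service, {}).get("environment") or {}
    if isinstance(env, list):
        # List form: ["KEY=value", ...]
        pairs = (item.partition("=") for item in env)
        return {key: value for key, _, value in pairs}
    return dict(env)


def check_adapter_wiring(
    config: dict,
    core: str = "opennvr-core",    # assumed service name
    adapter: str = "ai-adapters",  # assumed service name
) -> list[str]:
    """Return a list of human-readable problems; empty means the wiring looks fine."""
    problems = []
    if adapter not in config.get("services", {}):
        problems.append(f"service '{adapter}' is not defined")
    if not service_env(config, core).get("ADAPTER_URL"):
        problems.append(f"ADAPTER_URL is not set on '{core}'")
    return problems


# Usage (on the NVR host):
#   for problem in check_adapter_wiring(load_compose_config()):
#       print("WARNING:", problem)
```

An empty result only shows that the configuration is declared correctly; it does not prove the adapter container is running or reachable.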

Cloud Ecosystems (Hugging Face)

If your deployment requires large, state-of-the-art Vision-Language Models (VLMs) or other architectures that exceed local GPU VRAM, OpenNVR can route inference to Hugging Face.

Secure Token Injection (Recommended)

- Generate a strict Read-Only endpoint token via your Hugging Face Security Settings.

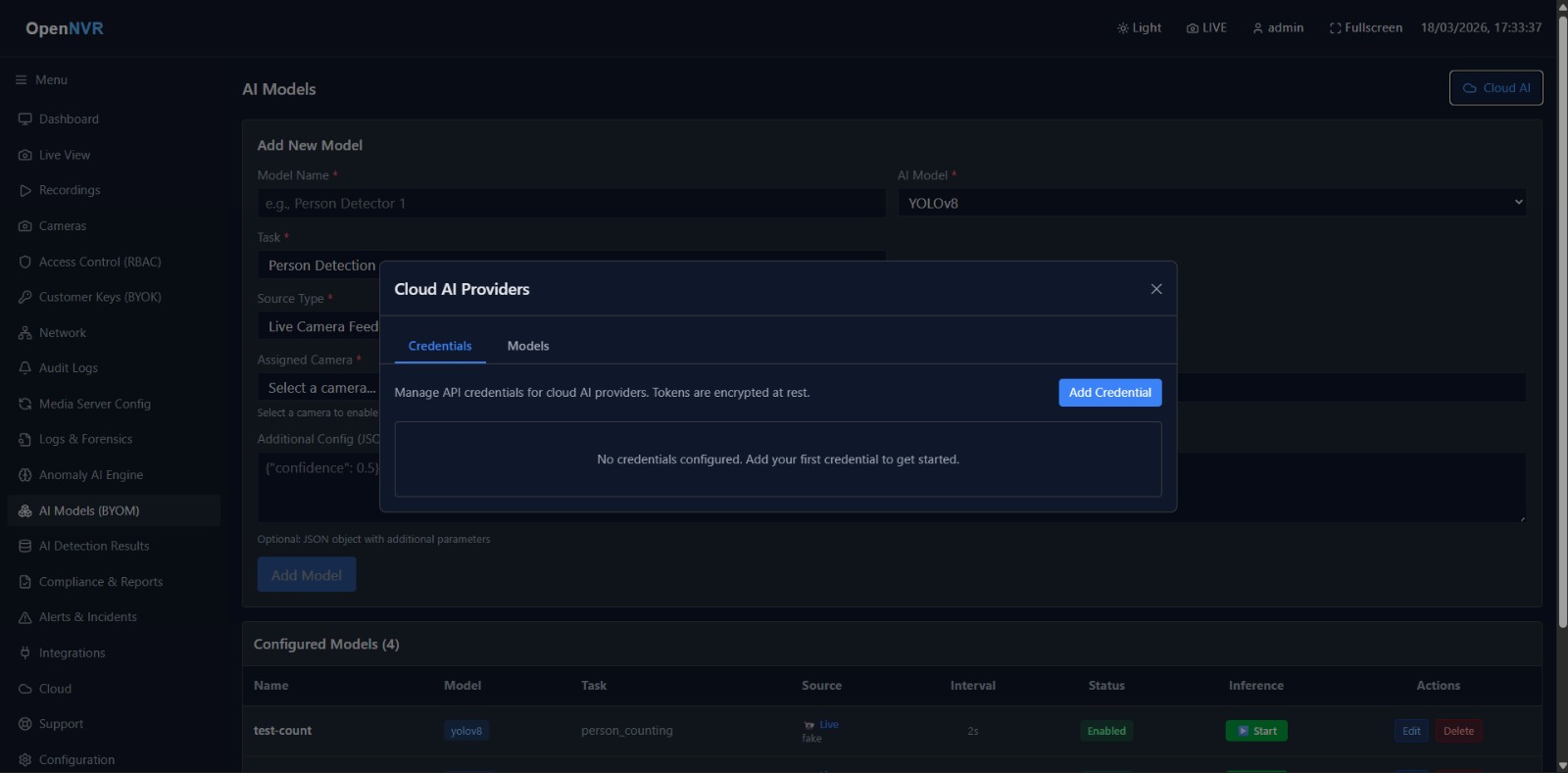

- Within the OpenNVR UI, navigate to Cloud Models / BYOM and select the Hugging Face provider.

- Inject the token and save the configuration. The FastAPI core will now securely proxy inference requests to the cloud ecosystem.

- Enter the exact Model ID (e.g., google/vit-base-patch16-224) and enable the pipeline.
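If you want to confirm that a token is accepted by Hugging Face before pasting it into the UI, a quick check against the Hub's whoami-v2 endpoint is one option. This is a minimal sketch using only the Python standard library; the function names build_whoami_request and check_token are illustrative, and nothing here reflects how OpenNVR itself validates tokens.

```python
import urllib.error
import urllib.request

WHOAMI_URL = "https://huggingface.co/api/whoami-v2"


def build_whoami_request(token: str) -> urllib.request.Request:
    """Build an authenticated request to the Hub's identity endpoint."""
    return urllib.request.Request(
        WHOAMI_URL,
        headers={"Authorization": f"Bearer {token}"},
    )


def check_token(token: str, timeout: float = 10.0) -> bool:
    """Return True if the Hub accepts the token, False if it answers 401.

    Any other HTTP or network error is re-raised so it is not mistaken
    for a bad token.
    """
    try:
        with urllib.request.urlopen(build_whoami_request(token), timeout=timeout) as resp:
            return resp.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 401:
            return False
        raise


# Usage (requires outbound internet access):
#   print(check_token("hf_your_generated_token_here"))
```

Run the check from a trusted machine and avoid leaving the token in your shell history or in logs.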

Hardcoded Environmental Injection

For deployment environments driven entirely by Infrastructure as Code (IaC), you can skip UI token injection by hardcoding the token natively inside your Compose manifests:

    services:
      ai-adapters:
        environment:
          - HF_TOKEN=hf_your_generated_token_here

System Troubleshooting

- DNS Resolution Failures: If the core cannot reach the adapter, verify DNS on the Docker bridge network. From inside opennvr_core, the hostname opennvr_ai must resolve and accept TCP connections on port 9100 (ping only tests the host, not the port).

- Token Rejection: An HTTP 401 from a cloud API usually indicates a missing, invalid, or expired API token. Ensure your Hugging Face token is active.

- Adapter Stack-Traces: Actively monitor the Python adapter logs during inference drops:

docker compose logs -f ai-adapters
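To tell a DNS failure apart from an adapter that resolves but is not listening, a small probe run from inside the core container can help. The sketch below assumes Python is available in that container and uses the hostname opennvr_ai and port 9100 from the ADAPTER_URL shown earlier; the function name diagnose_adapter is hypothetical.

```python
import socket


def diagnose_adapter(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify connectivity to host:port.

    Returns 'dns_failure' if the name does not resolve, 'unreachable' if it
    resolves but the TCP connection fails or times out, and 'ok' otherwise.
    """
    try:
        socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return "dns_failure"
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "ok"
    except OSError:
        # Covers refused connections, timeouts, and routing errors.
        return "unreachable"


# Usage (inside the core container):
#   print(diagnose_adapter("opennvr_ai", 9100))
```

A 'dns_failure' result points at the Docker network configuration, while 'unreachable' suggests the adapter container is stopped or listening on a different port.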